For the past decade, "move everything to the cloud" was the dominant mantra of enterprise IT. But a quiet revolution is underway — and it's pushing computing power back to the edges of the network.

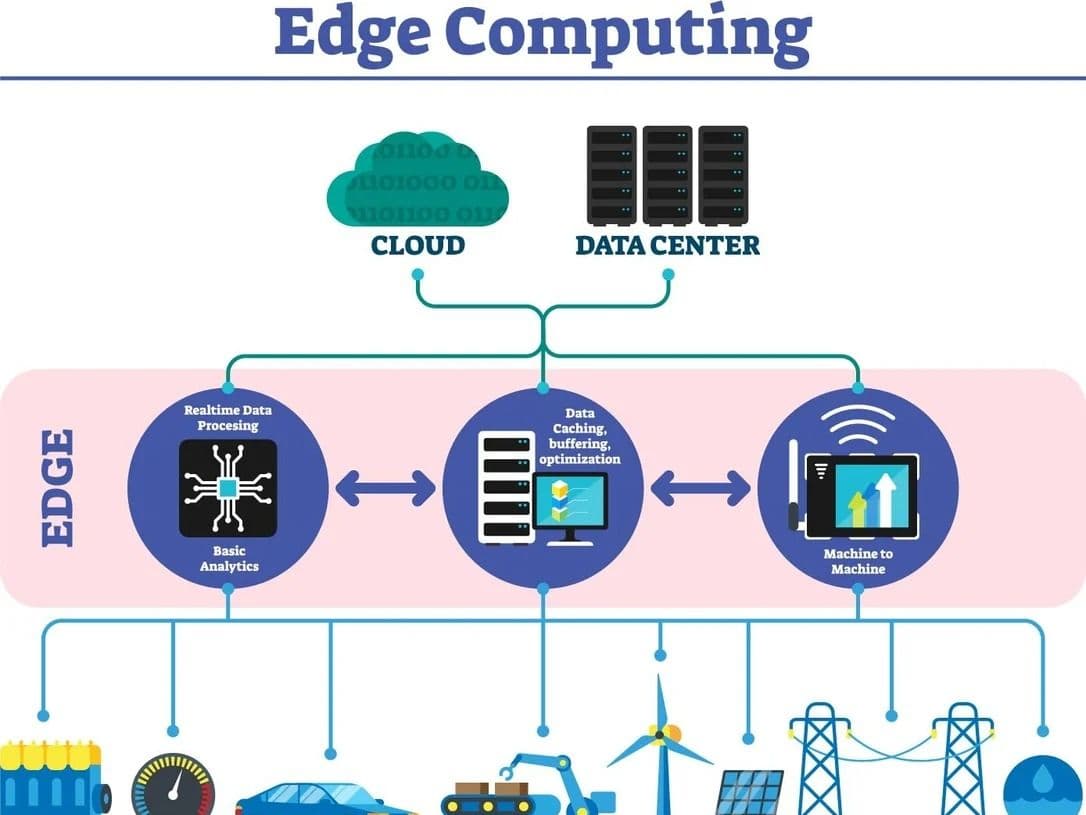

Edge computing refers to processing data closer to where it's generated — on devices, local servers, or regional hubs — rather than sending it all the way to a centralized cloud data center. The result? Faster response times, lower bandwidth costs, and greater reliability in areas with spotty internet connectivity.
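To make the bandwidth point concrete, here is a minimal sketch of an edge node summarizing sensor readings locally and forwarding only the summary. The readings, the window size, and the `summarize_window` helper are all hypothetical, chosen purely for illustration; real deployments will differ in what they keep and what they discard.

```python
import json
import statistics


def summarize_window(readings: list[float]) -> dict:
    """Collapse one window of raw readings into a compact summary (hypothetical helper)."""
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": statistics.fmean(readings),
    }


# Assumption: 600 made-up temperature samples collected on the device.
raw = [20.0 + 0.01 * i for i in range(600)]
summary = summarize_window(raw)

# Compare the payload sizes if each were sent upstream as JSON.
raw_bytes = len(json.dumps(raw).encode("utf-8"))
summary_bytes = len(json.dumps(summary).encode("utf-8"))

print(f"raw payload: {raw_bytes} bytes, summary payload: {summary_bytes} bytes")
```

The trade-off is that the cloud only ever sees the summary, so anything not captured in it is lost unless the edge node also stores the raw data.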

Why Now?

The explosion of IoT (Internet of Things) devices has been a major catalyst. From smart factory floors to autonomous vehicles, modern systems generate enormous volumes of data, and many of them simply can't afford the latency of a round trip to the cloud. A self-driving car making a split-second braking decision can't wait 200 milliseconds for a server in another city to respond.
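A quick back-of-the-envelope calculation shows why a 200-millisecond delay matters for a moving vehicle. The sketch below assumes constant speed, and the speeds are illustrative values rather than figures from any real system.

```python
def distance_during_delay(speed_kmh: float, delay_ms: float) -> float:
    """Return the metres travelled at a constant speed while waiting out the delay."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * (delay_ms / 1000)


# Assumed speeds, chosen only for illustration.
for speed_kmh in (50, 100, 130):
    metres = distance_during_delay(speed_kmh, 200)
    print(f"{speed_kmh} km/h: {metres:.1f} m travelled during a 200 ms round trip")
```

At 100 km/h, the car covers roughly 5.6 metres before the answer comes back, which is more than the length of a typical passenger car.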

Telecom companies rolling out 5G networks have also accelerated adoption. With 5G's ultra-low latency, edge nodes placed near cell towers can process data in near real-time — opening up possibilities for augmented reality, remote surgery, and industrial automation.

Challenges Ahead

Edge computing isn't without its hurdles. Managing thousands of distributed nodes is far more complex than maintaining a handful of centralized data centers. Security is another concern: each edge node is a potential entry point for attackers, so every new node widens the overall attack surface. Companies investing in this space are working hard to develop standardized management platforms and zero-trust security frameworks.

Despite the challenges, analysts predict the global edge computing market will continue growing rapidly through the late 2020s. For businesses that depend on real-time data, the edge isn't just an option — it's becoming a necessity.